Last night my phone buzzed with an email. One line, no punctuation: "Are you free to talk it is urgent."

The sender was a client I hadn't heard from in years. Back in 2020 I built him a trading execution system — connects to Interactive Brokers, reads his strategy, fires trades automatically. The kind of software that either works quietly for years or announces its failure in a very expensive way. For five years it had been doing the former. No calls, no complaints, no news. Which in software is the best possible review.

I called him immediately.

A $20 fix for five years of software

Here's what happened. A few weeks back he got a $20 ChatGPT Plus subscription and had an idea. His trading system needed two small features — nothing dramatic, he said. A slight adjustment to how positions were displayed and a secondary filter on the entry signals. He figured: the AI can probably handle this. He had the source code. He had the AI. Why not?

I want to stop here and say: this is not an unreasonable idea. In 2025, using AI to make small modifications to existing code works for a lot of things. I use it myself.

He shared the source code and described what he wanted. The AI came back with a few technical questions about the IB API and the strategy logic. He didn't know the answers — he's not a developer, he's a trader — so he told it: "I don't know, you're the AI, just do it and do it now."

The AI did it. He tested the first feature. It worked. I genuinely would have felt good about that too.

When it all collapsed

He kept going. Change this color. Make that number bigger. Show this column, hide that one. Make the refresh faster. A dozen prompts, maybe more — he lost count. The AI obliged every single time, immediately, confidently, with a cheerful explanation of what it had done.

Nobody told him to stop. The AI certainly didn't.

At some point he decided to test the second feature. The software didn't start. Not a crash with a useful error, just a wall of red text that meant nothing to him. He asked the AI to roll back to the last working version.

It said it couldn't.

What followed was — and I'm pausing here because this is the part I wasn't prepared for — a hallucination sprint. The AI started generating fixes. One after another. Each one confident, each one cleanly formatted, each one with a detailed explanation of what it was doing. The code got longer. The explanations got more elaborate. And at some point, the software ran again.

He tested a trade. It executed something. Wrong direction. Wrong size. Wrong parameters.

He called me.

What I actually found in the code

My fix took ten minutes. I keep the original deliverable — always do. Recompile, send it over, done. Five years of working software were never actually in danger.

But I asked him to send me what the AI had produced. I wanted to see it.

I've read about AI hallucinations. I've seen the examples online — confident wrong answers, invented citations, plausible-sounding nonsense. I thought I understood what the word meant.

Looking at this code was different.

It wasn't obviously broken. It was well-formatted. The variable names made sense. There were comments explaining the logic, and the comments sounded right. If you handed this to someone who didn't know what the original system did, they might have called it clean work.

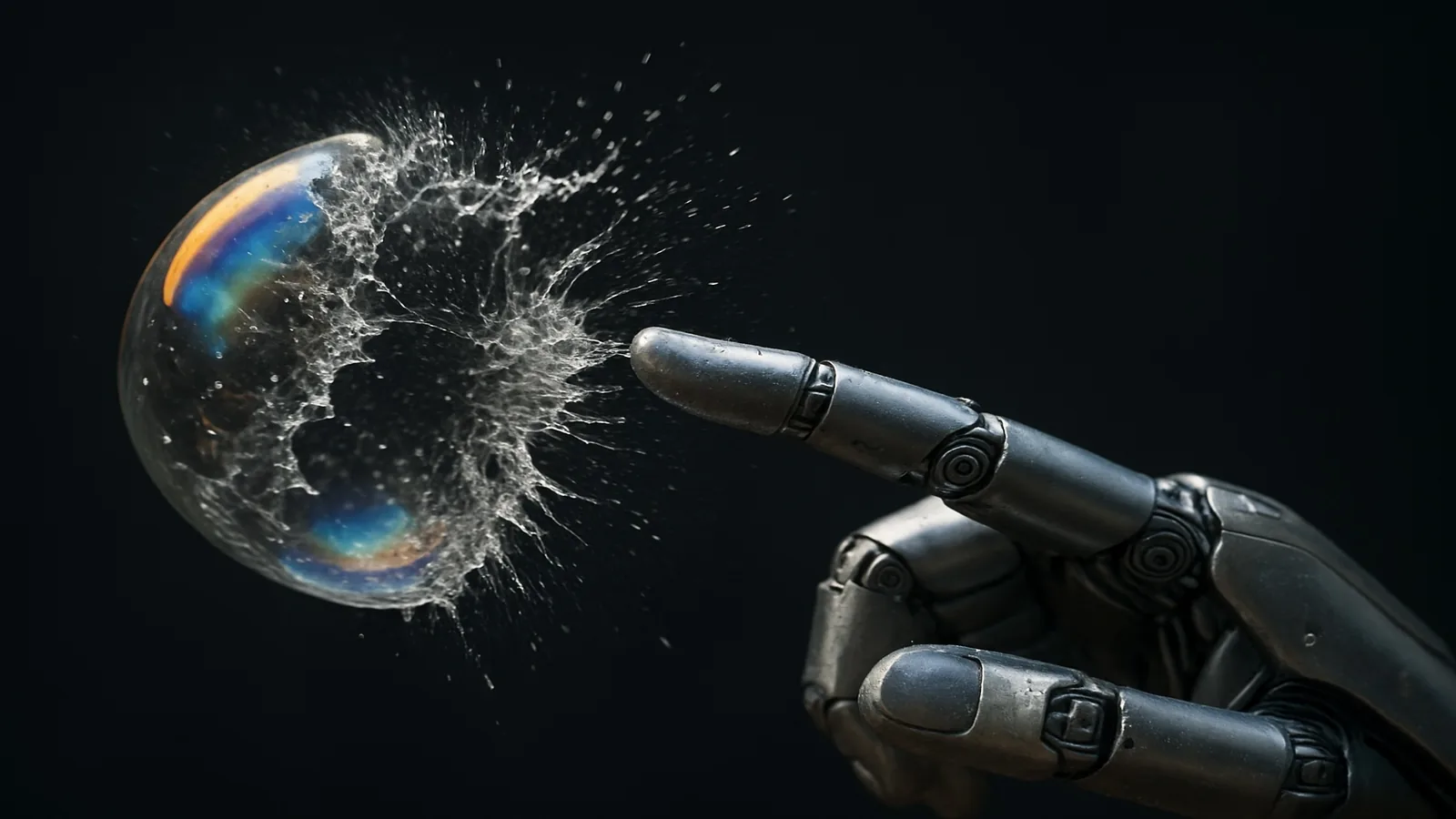

But the core trading logic — the part that determines position sizing and direction — had been quietly replaced. Not deleted. Replaced with something structurally similar but mathematically wrong. At some point in that long conversation the AI had lost the thread entirely and started filling in the gaps from pattern-matching. Not from any actual understanding of what the software was supposed to do.

The worst part: it ran. It produced output. It looked like it was working.

A $20 subscription is not a developer. The dangerous part isn't the price. It's that the AI doesn't tell you when it's guessing.

I'm not writing this to say don't use AI for code. I use it. It's genuinely good at well-defined, self-contained problems. But there's a version of using it where you stay in the loop — you read what it produces, you test carefully, you keep a clean backup — and a version where you hand it the wheel because the first thing it did worked fine.

The first version is a useful tool. The second version is what I saw in that code.

And this was financial software. "It seemed to be working" is not a passing grade when the thing it's running is a live trading account.
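For what "testing carefully" can look like in practice, here is a minimal sketch of a golden-case check in Python. It is not the client's code: `size_order`, its parameters, and the fixed-dollar-risk formula are hypothetical stand-ins I made up for illustration. The idea is simply to pin a few inputs whose correct side and quantity you already know, then rerun them after every change.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    side: str       # "BUY" or "SELL"
    quantity: int   # number of shares


def size_order(signal: int, risk_dollars: float, entry: float, stop: float) -> Order:
    """Hypothetical sizing rule (an assumption, not the real system):
    risk a fixed dollar amount between entry price and stop price."""
    if signal not in (1, -1):
        raise ValueError("signal must be +1 (long) or -1 (short)")
    risk_per_share = abs(entry - stop)
    if risk_per_share == 0:
        raise ValueError("entry and stop must differ")
    quantity = int(risk_dollars // risk_per_share)
    return Order("BUY" if signal == 1 else "SELL", quantity)


# Inputs paired with answers worked out by hand from the known-good version.
GOLDEN_CASES = [
    ((1, 1000.0, 50.0, 48.0), Order("BUY", 500)),
    ((-1, 1000.0, 50.0, 52.0), Order("SELL", 500)),
    ((1, 500.0, 10.0, 9.5), Order("BUY", 1000)),
]


def check_golden_cases(fn=size_order):
    """Return every case where fn disagrees with the recorded answer."""
    failures = []
    for args, expected in GOLDEN_CASES:
        actual = fn(*args)
        if actual != expected:
            failures.append((args, expected, actual))
    return failures


if __name__ == "__main__":
    problems = check_golden_cases()
    for args, expected, actual in problems:
        print(f"MISMATCH for {args}: expected {expected}, got {actual}")
    print("all golden cases pass" if not problems else f"{len(problems)} failing")
```

A check like this would not explain why the logic changed, but it would have flagged the wrong direction and wrong size before a live order did.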

💡 Honest advice: Before any AI touches production code, make sure you have a backup you can restore in ten minutes. Not a git history you'll dig through at 11pm — a clean, labeled, working copy. That's the safety net that saved five years of my client's software. The AI didn't provide it. I did.
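If you want something concrete, here is a rough Python sketch of what I mean by a clean, labeled, working copy. The function names, the folder naming scheme, and the `MANIFEST.sha256` file are my own assumptions for illustration, not a standard tool. It copies the project into a timestamped folder, records a checksum for every file, and refuses to restore a copy that no longer matches its checksums.

```python
import hashlib
import shutil
from datetime import datetime, timezone
from pathlib import Path

MANIFEST = "MANIFEST.sha256"  # hypothetical manifest file name


def snapshot(source_dir: Path, backup_root: Path, label: str) -> Path:
    """Copy source_dir into a new timestamped, labeled folder under backup_root.
    backup_root must live outside source_dir."""
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    target = backup_root / f"{stamp}_{label}"
    shutil.copytree(source_dir, target)  # raises if target already exists
    files = sorted(p for p in target.rglob("*") if p.is_file())
    lines = [
        f"{hashlib.sha256(p.read_bytes()).hexdigest()}  {p.relative_to(target).as_posix()}"
        for p in files
    ]
    (target / MANIFEST).write_text("".join(line + "\n" for line in lines))
    return target


def verify(snapshot_dir: Path) -> bool:
    """Check every file listed in the manifest against its recorded checksum."""
    for line in (snapshot_dir / MANIFEST).read_text().splitlines():
        digest, rel = line.split("  ", 1)
        path = snapshot_dir / rel
        if not path.is_file():
            return False
        if hashlib.sha256(path.read_bytes()).hexdigest() != digest:
            return False
    return True


def restore(snapshot_dir: Path, dest_dir: Path) -> Path:
    """Copy a verified snapshot to dest_dir, leaving the manifest behind."""
    if not verify(snapshot_dir):
        raise RuntimeError(f"snapshot {snapshot_dir} does not match its manifest")
    return Path(shutil.copytree(snapshot_dir, dest_dir,
                                ignore=shutil.ignore_patterns(MANIFEST)))
```

Something like `snapshot(Path("trading-system"), Path("backups"), "known-good")` before the first prompt, with both paths being placeholders, is the whole habit. The checksums are there so that the copy you restore at 11pm is provably the one you saved.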