In 2012 I was working in Bucharest for Huawei. The office had 1,200 employees — one of the largest Global Network Operations Centers in the world, responsible for running and maintaining mobile networks across Europe. Big team. Bigger responsibility. On any given day, that office was the reason your call connected in France, Germany, or the UK.

But there was another office.

The office that could survive a nuclear strike

It was a separate building — smaller, quieter, and built to a completely different standard. Earthquake-proof. Designed to withstand events that would level the main building. Same infrastructure as the main floor: same systems, same connectivity, same full ability to manage European networks from day one. It was not a backup in the backup-tape sense. It was a functional twin.

150 employees were assigned to that office. Not assigned to work there day to day — assigned to appear there. If anything disrupted the main building, those 150 people had ten minutes to be seated and operational. The networks would not go down. The calls would still connect. No customer in Europe would notice anything had happened.

They drilled for it every month. Not a fire drill — a continuity drill. Who sits where. Which system you log into first. Who calls which partner. How you hand off a critical alert when you're running between buildings in the same compound.

What the drill was really about

I had never seen anything like it, and I understood immediately why it was one of Huawei's selling points when pitching to bring a new network operator to that center. You weren't just buying operational capability. You were buying a guarantee.

The drill wasn't theater. It existed because someone had sat down and asked a serious question: what is the worst thing that can happen, and how quickly can we recover? Then they built the answer into the physical infrastructure — and practiced it until the emergency was boring.

Continuity is not a plan you write. It's a capability you build, then rehearse until the emergency is boring.

That distinction matters. A Word document titled "Business Continuity Plan.docx" that hasn't been opened since 2021 is not a continuity plan. It's a liability document.

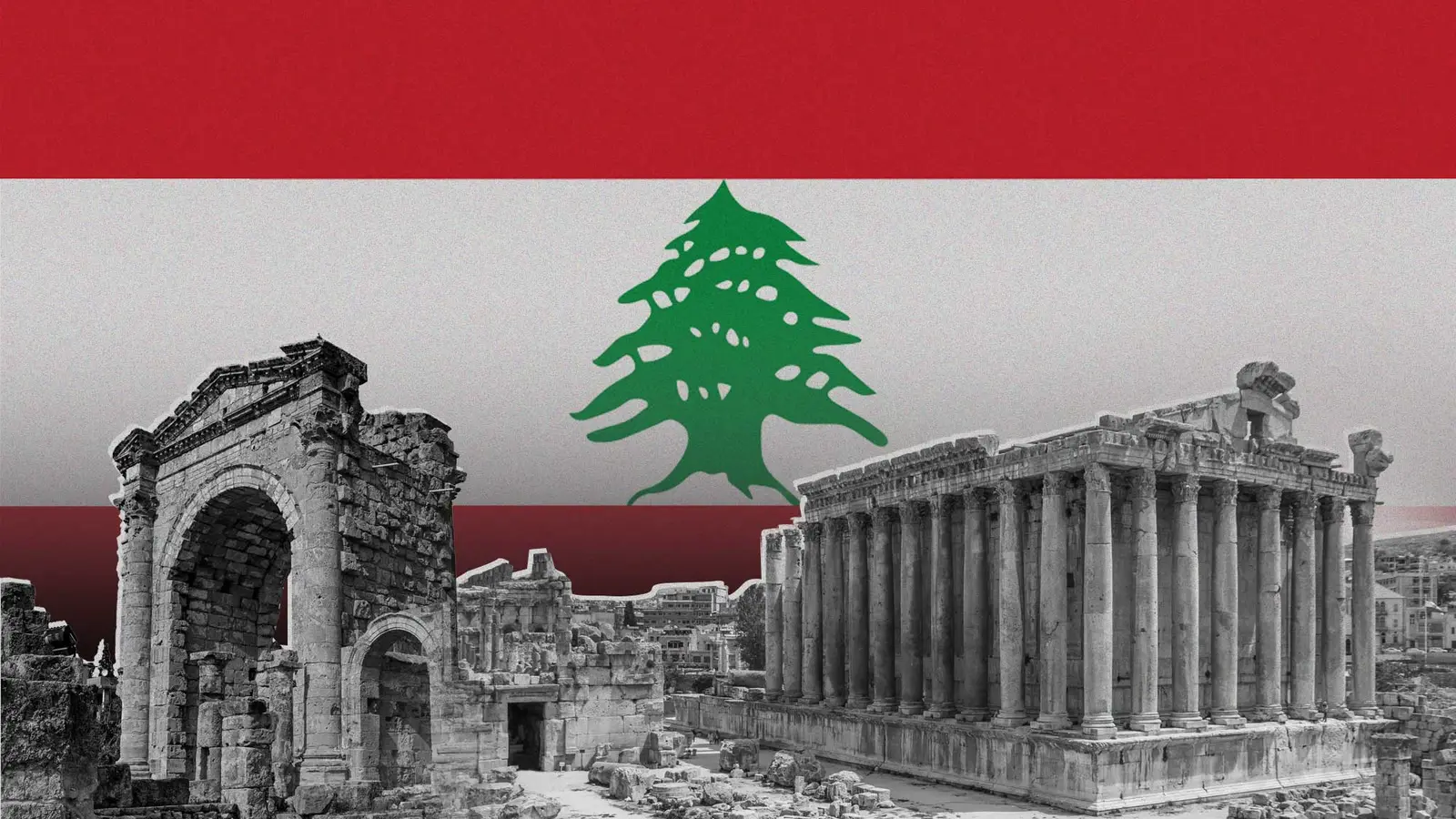

Back to Lebanon

The war has started again. As I write this, that's not a metaphor or a headline I'm reading from a safe distance. It's happening in the region I live and work in.

So I'll ask directly: what is your continuity plan?

Not the document. Practically. If your office is inaccessible tomorrow — whether due to a strike, a power grid failure, or a building that's simply no longer reachable — what happens to your business the next morning? Can your team work? Can your clients reach you? Can your operations continue from wherever your people happen to be?

Huawei ran a 1,200-person operations center and still built a redundant office where 150 people could take over. Not because they expected catastrophe, but because they refused to find out what would happen if it came and they weren't ready. The cost of a ten-minute outage across European mobile networks would have been catastrophic. So they made sure they would never have to pay it.

Your business is not running European mobile networks. But it is running someone's livelihood. Possibly your family's. Possibly your employees' families. That warrants the same seriousness.

Distributed is a decision

You don't need a bunker. The infrastructure to run a business from anywhere costs almost nothing compared to what Huawei built in Bucharest.

Cloud hosting means your data doesn't live in your building — it lives in a data center in Frankfurt or Amsterdam that has its own continuity guarantees. Remote access means your team can operate from home, from another city, from a country where the situation is stable. Documented processes mean the work doesn't freeze when one person is unreachable.

These are not complex technology projects. They're decisions you make once and maintain. The hard part isn't the implementation — it's accepting that the risk is real and deciding to address it before something forces your hand.

I've worked with Lebanese businesses that had everything on-premises. One server, one office, one person who knew the admin password. When the crisis hit in 2019, several of them spent weeks trying to recover data and reconstruct access. Not because the software failed. Because nobody had planned for the office to be unreachable.

The ones who had moved to cloud, even partially, kept running. Not perfectly. But running.

The region we work in has a higher-than-average probability of disruption. That's not pessimism — it's twenty years of observable history. Building a business here without accounting for that probability is a choice. Not a smart one.

You have the option right now, while things are calm enough to think clearly, to make a different choice.