A few weeks ago I attended a webinar run by a small Lebanese startup. Their pitch: help businesses introduce AI into their operations. I signed up for two reasons — I like seeing how other developers are thinking about AI, and honestly, it's hard not to feel good when local companies are building products. Both reasons held up. The product they were building, less so.

Here's what they're selling. An AI-ready server — real hardware, proper GPUs, the muscle to run a model locally — placed in your office. They download an open-source LLM, train it on your company's data and processes, and hand it over as your company's own dedicated AI. The security argument: in an era where nothing in digital format can be fully trusted to the cloud, this is the only way to keep your data truly yours. The price tag: $18,000 to $25,000 upfront, plus ongoing maintenance.

I frowned when I heard it. Not because of the number on the invoice.

What made me frown

The solution itself.

There is a real, meaningful gap between the open-source LLMs you can download and run locally (Llama, Mistral, DeepSeek, and others) and the frontier models behind ChatGPT, Claude, and Gemini. This is not a branding gap. It's a capability gap. The frontier models are typically trained with far more compute, by teams of hundreds of researchers, and they are updated regularly. The open-source alternatives are genuinely impressive for what they are. They are not the same thing.

When this startup trains a local model on your company's data, they are not giving you a smarter version of GPT-4 that happens to know your company. They are giving you a weaker base model with company-specific context layered on top. That's a fundamentally different product, and most businesses making this decision don't know to ask about the difference.

The math

During the Q&A I asked one question: how long before the cost of API calls to a paid model exceeds the cost of this server?

A typical Lebanese SMB using AI meaningfully (automated reports, internal queries, document processing, some workflow automation) might spend $100 to $200 a month on API calls. That's a generous estimate for most businesses at this stage. At $200 a month, you would need 125 months, a little over ten years of continuous use, to spend $25,000. At $100 a month, it would take more than twenty years. And that's before factoring in that API pricing has been consistently dropping, not rising.
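If you want to run the same break-even arithmetic with your own numbers, here is a minimal sketch. The function name and the `monthly_maintenance` parameter are my own illustration; the webinar gave no maintenance figure, so it defaults to zero, which flatters the server.

```python
def months_to_break_even(upfront_cost: float,
                         monthly_api_spend: float,
                         monthly_maintenance: float = 0.0) -> float:
    """Months of API spending needed to match the upfront cost of a local server.

    monthly_maintenance is a hypothetical input (no figure was quoted in the pitch).
    It is an ongoing server cost, so it is subtracted from the monthly API spend
    the server would replace.
    """
    net_monthly_saving = monthly_api_spend - monthly_maintenance
    if net_monthly_saving <= 0:
        # The server never pays for itself if upkeep costs as much as the API bill.
        return float("inf")
    return upfront_cost / net_monthly_saving


# Price range and API spend range quoted in this article.
for upfront in (18_000, 25_000):
    for spend in (100, 200):
        months = months_to_break_even(upfront, spend)
        print(f"${upfront:,} server vs ${spend}/month API: "
              f"{months:.0f} months ({months / 12:.1f} years)")
```

With the figures above, the $25,000 server against $200 a month comes out to 125 months. Add any real maintenance fee and the break-even point moves further out, or disappears entirely.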

Infrastructure, a maintenance contract, and an initial training engagement: that cost structure makes sense for companies that process millions of requests a day and have documented, specific reasons they cannot use cloud APIs. Most Lebanese businesses are nowhere near that volume.

When it actually makes sense

To be fair to the concept — local LLMs do make sense in specific situations. Not common ones, but real ones.

Regulated industries with hard legal data residency requirements: certain banking operations, healthcare systems with patient data, government contracts that explicitly prohibit cloud processing. Air-gapped environments where the machine literally cannot talk to the internet. Companies already operating at a scale where they've run the numbers properly and cloud API costs are genuinely higher than self-hosting — and those companies typically have a dedicated ML team to manage the infrastructure.

There's also a legitimate argument for local models in high-sensitivity, low-volume use cases where you need deterministic control over exactly what the model does and sees. Some legal and financial workflows fit this description.

None of this describes a typical Lebanese distribution company, professional services firm, or manufacturer in 2026. For those businesses, local infrastructure is a solution looking for a problem that doesn't exist at their scale.

The obsolescence problem

This is the part that concerned me most, and it didn't come up once during the webinar.

AI is moving faster than any technology I've watched in twenty years of building software. Not as fast as the smartphone era. Faster than that. New base models from the major labs ship roughly every six to nine months, and each generation meaningfully leapfrogs the last. What was state of the art in early 2024 is already noticeably behind what's available today.

A local LLM installed in early 2026 will feel like running Windows 98 by 2028. The company that sold it to you will need to come back, charge you for an upgrade, and you'll still be behind whatever GPT-6 or Claude 5 is doing at that point.

You're not buying AI. You're buying a snapshot of AI, frozen at the moment of installation.

Cloud-based models get upgraded on the provider's side. When a better version ships, moving to it is usually a small configuration change on your end, not a hardware purchase, and often comes at the same or a lower price. The server in the corner does not do that.

What actually works

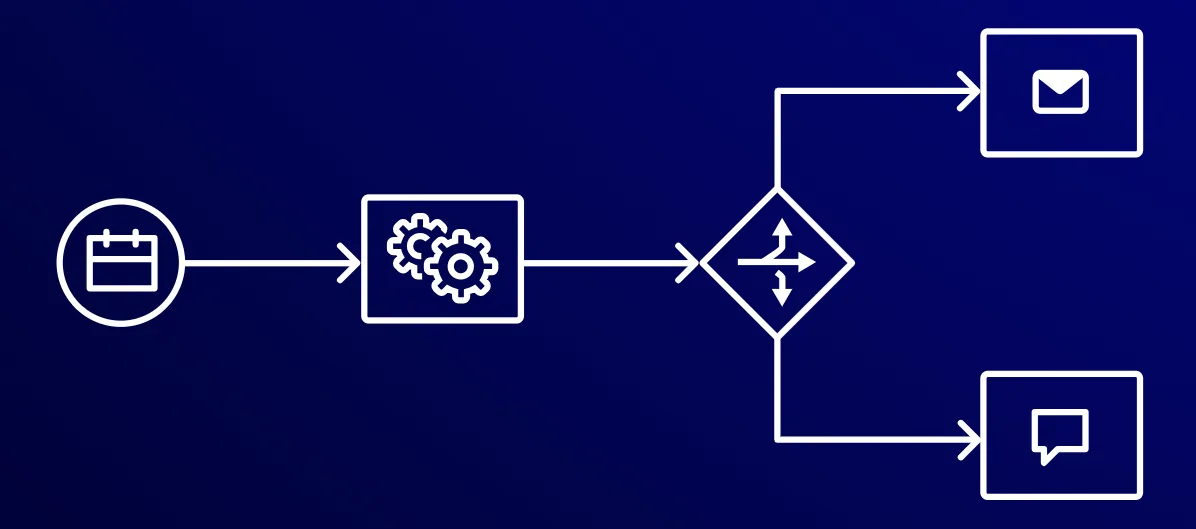

For most businesses — distribution, pharma, professional services, trading, manufacturing — the right answer is not infrastructure. It's workflow.

AI workflows and agents built on top of API calls to frontier models handle the vast majority of what businesses actually need: automated reporting, document analysis, internal knowledge retrieval, communication drafts, data extraction, inventory queries. All of it at a fraction of the cost, without hardware overhead, and with models that improve on their own schedule.

The security concern is real. But it's solvable without buying a server. You control what data you send to the API. A properly designed workflow doesn't send your raw sensitive records to the cloud. It sends only what each task needs, as structured queries, and receives structured answers. Your raw records stay on your systems. The model does the reasoning. That's an architecture decision, not a hardware purchase.
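To make "you control what data you send" concrete, here is a rough sketch of a minimization step that runs on your own systems before anything reaches an API. Everything in it is an assumption for illustration: the field names, the simple email pattern, and the `build_prompt` helper are hypothetical, and the actual call to a model provider is deliberately left out.

```python
import json
import re

# Hypothetical field names; a real workflow would list its own sensitive columns.
SENSITIVE_FIELDS = {"customer_name", "phone", "email", "iban"}

# Deliberately simple pattern for illustration, not a complete email matcher.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def minimize_record(record: dict) -> dict:
    """Drop sensitive fields and mask email addresses left in free-text values."""
    cleaned = {}
    for key, value in record.items():
        if key in SENSITIVE_FIELDS:
            continue  # never leaves your systems
        if isinstance(value, str):
            value = EMAIL_PATTERN.sub("[email]", value)
        cleaned[key] = value
    return cleaned


def build_prompt(task: str, records: list[dict]) -> str:
    """Combine a task description with minimized records into one structured query."""
    safe_records = [minimize_record(r) for r in records]
    return f"{task}\n\nData:\n{json.dumps(safe_records, indent=2)}"


invoices = [
    {"customer_name": "Example Trading SARL", "email": "owner@example.com",
     "amount_usd": 1200, "days_overdue": 45, "note": "Asked owner@example.com twice"},
]
prompt = build_prompt("Summarize overdue invoices by risk level.", invoices)
print(prompt)  # this string is the only thing that would be sent to the API
```

The point of the design is that the filter sits between your database and the network. What goes in the allow or deny list is a business decision you make once per workflow, and you can log every prompt to audit exactly what left the building.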

I genuinely root for Lebanese startups building products. That hasn't changed. But the right response to a $25,000 pitch isn't excitement — it's the same question you'd ask about any major decision: what problem does this actually solve, and is this the right way to solve it?

Most of the time, with AI right now, the answer to the second part is no.

💡 First step: Before signing any contract for AI infrastructure, spend one hour mapping your actual requirements. What specific tasks do you want AI to handle? What data would those tasks need to see? That conversation — which any competent developer can have with you at no cost — will tell you whether you need a server or a few well-designed API integrations.